Just How Smart Are Smart Machines?

The number of sophisticated cognitive technologies that might be capable of cutting into the need for human labor is expanding rapidly. But linking these offerings to an organization’s business needs requires a deep understanding of their capabilities.

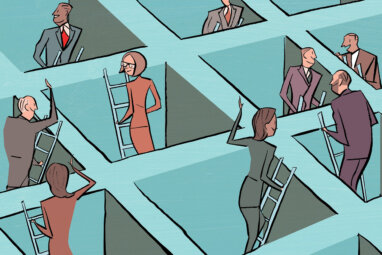

If popular culture is an accurate gauge of what’s on the public’s mind, it seems everyone has suddenly awakened to the threat of smart machines. Several recent films have featured robots with scary abilities to outthink and manipulate humans. In the economics literature, too, there has been a surge of concern about the potential for soaring unemployment as software becomes increasingly capable of decision making. Yet managers we talk to don’t expect to see machines displacing knowledge workers anytime soon — they expect computing technology to augment rather than replace the work of humans. In the face of a sprawling and fast-evolving set of opportunities, their challenge is figuring out what forms the augmentation should take. Given the kinds of work managers oversee, what cognitive technologies should they be applying now, monitoring closely, or helping to build?

To help, we have developed a simple framework that plots cognitive technologies along two dimensions. (See “What Today’s Cognitive Technologies Can — and Can’t — Do.”) First, it recognizes that these tools differ according to how autonomously they can apply their intelligence. On the low end, they simply respond to human queries and instructions; at the (still theoretical) high end, they formulate their own objectives. Second, it reflects the type of tasks smart machines are being used to perform, moving from conventional numerical analysis to performance of digital and physical tasks in the real world. The breadth of inputs and data types in real-world tasks makes them more complex for machines to accomplish.

Putting those two dimensions together creates a matrix into which we can place the many technologies known as “smart machines.” More important, the matrix clarifies today’s limits on machine intelligence and the challenges technology innovators are working to overcome next. For whatever type of task a manager wants to redesign, the framework shows how autonomously that task might be performed and by what kinds of machines.

Four Levels of Intelligence

Clearly, the level of intelligence of smart machines is increasing.