Can Surveillance AI Make the Workplace Safe?

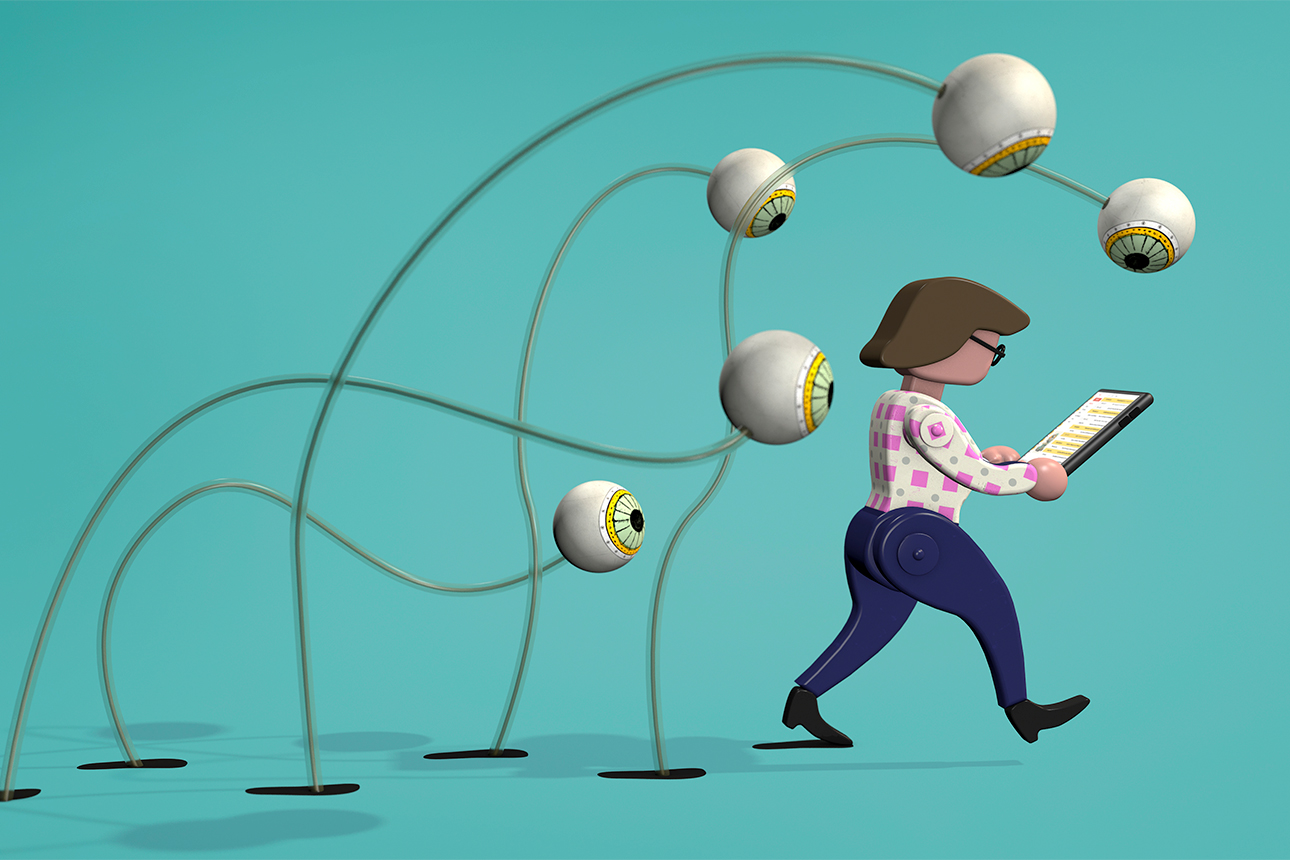

It’s possible to protect employees’ health and well-being by digitally monitoring their behavior, but how far should companies go?

Image courtesy of Richard Borge/theispot.com

As the world recovers from the initial shock wave caused by the COVID-19 pandemic, businesses are preparing for their transitions back to their physical workplaces. In most cases, they are opening up gradually, with an unprecedented focus on keeping workers safe as they return. To protect employees’ health and well-being, organizations must systematically reengineer their workspaces. This may include reconfiguring offices, rearranging desks, changing people’s shifts to minimize crowding, and allowing people to work remotely long term. Then there are the purely medical measures, such as regular temperature checks, the provision of face masks and other personal protective equipment, and even onsite doctors. Such precautions would have seemed extreme a short time ago but are quickly becoming accepted practices.

Soon, organizations may also start monitoring employees’ whereabouts and behavior more closely than ever, using surveillance tools such as cellphone apps, office sensors, and algorithms that scrape and analyze the vast amounts of data people produce as they work. Even when they are not in the office, workers generate a sea of data through their interactions on email, Slack, text and instant messaging platforms, videoconferences, and the still-not-extinct phone call. With the help of AI, this data can be translated into automated, real-time diagnostics that gauge people’s health and well-being, their current risk levels, and their likelihood of future risk. A parallel here is the use of various surveillance measures by many governments — with some success — to contain the pandemic (in China, Israel, Singapore, and Australia, for instance). Most notably, track-and-trace tools have been deployed to follow people’s every move, with the intention of isolating infected individuals and reducing contagion.1

Technological surveillance is always controversial, not least for being out of sync with the basic values and tenets underpinning free and democratic societies. Indeed, the idea of using AI to do previously “human” tasks is already quite polarizing; for many of us, the notion of also using it for surveillance adds an element of Orwellian creepiness.

And yet, even in free and democratic societies, we have already given away so much personal data in the digital age that the concept of privacy has been greatly devalued and diluted.

References

1. S. Bond, “Apple and Google Build Smartphone Tool to Track COVID-19,” NPR, April 10, 2020, www.npr.org.

2. J. Bersin and T. Chamorro-Premuzic, “New Ways to Gauge Talent and Potential,” MIT Sloan Management Review 60, no. 2 (winter 2019): 7-10; and H. Schellmann, “How Job Interviews Will Transform in the Next Decade,” The Wall Street Journal, Jan. 7, 2020, www.wsj.com.

3. R.A. Calvo, D.N. Milne, M.S. Hussain, et al., “Natural Language Processing in Mental Health Applications Using Non-Clinical Texts,” Natural Language Engineering 23, no. 5 (September 2017): 649-685.

4. P. Wlodarczak, J. Soar, and M. Ally, “Multimedia Data Mining Using Deep Learning,” in “Fifth International Conference on Digital Information Processing and Communications” (Sierre, Switzerland: IEEE, 2015).

5. B. Dattner, T. Chamorro-Premuzic, R. Buchband, et al., “The Legal and Ethical Implications of Using AI in Hiring,” Harvard Business Review, April 25, 2019, https://hbr.org.

6. A. Agrawal, J. Gans, and A. Goldfarb, “Prediction Machines: The Simple Economics of Artificial Intelligence” (Boston: Harvard Business Review Press, 2018).